I had the pleasure of spending a significant amount of time elbows deep in a Remote Desktop Services deployment this week. As part of the effort, I published the RDS RDWeb IIS page with the Azure AD Application Proxy so MFA can be leveraged for remote desktop services.

The Problem

According to the Microsoft documentation, this should have been a straightforward task (isn’t that always the case?). However, my results were mixed at best. Accessing the site through the Azure AD Application Proxy produced slow-loading pages and broken image links, and sometimes the page returned the error “This corporate app can’t be accessed. If you continue to get this error, contact your IT department.”

The error page reported a gateway timeout and suggested checking the Application Proxy Connector event log for reported errors, so that’s what I did. I noticed a lot of Event ID 13006 warnings in the AadApplicationProxy Connector event log, with errors saying that the client and backend URLs were not reachable. Opening links from the web server would sometimes work and other times not. However, I had no problem accessing any of these pages from a corporate workstation.

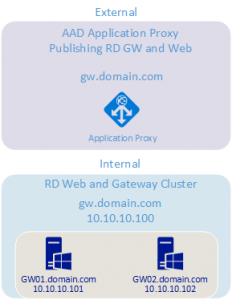

The first step is to review my configuration to determine how traffic is flowing. Below are the servers involved in the RDP deployment. I’m limiting my details to the RDWeb servers as this is really a web server and Application Proxy issue, not specific to RDS.

GW.domain.com RDWeb Azure load balancer

GW01.domain.com RDWeb and RD Gateway, AAD Proxy Connector

GW02.domain.com RDWeb and RD Gateway, AAD Proxy Connector

Here is the expected flow as the user signs into the application externally:

1) Sign into Azure AD Application Proxy via O365

2) AAD App Proxy connects to the connector service inside the corporate network

3) The connector service redirects to the load balanced resource

4) The load balancer redirects to one of the two Gateway servers

5) The AAD App Proxy redirects the user to the web page

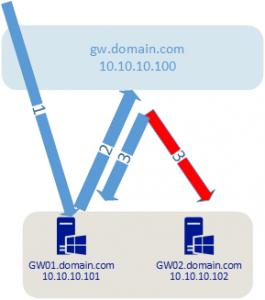

However, this flow was skewed because the AAD Proxy connector was running on the RDWeb servers behind the load balancer while attempting to access the load balanced resource. The illustration below shows what was happening.

1) Inbound connection to the AAD Application Proxy connector service

2) Connector service accesses the load balanced resource from a server that is itself behind the load balancer

3) Things get all jacked up

It appears that the load balancer was not able to keep accurate session data because the session was coming from behind the load balancer. In hindsight, it makes sense that this would cause an issue. It also explains why I saw issues accessing URLs from the web server, but not from a client workstation.

The Solution

So, how to fix it? The first (and recommended) option is to put the Azure AD Proxy connector service on a server external to the load balancer. This will provide correct session flow that keeps the load balanced traffic as it was intended.

The second option (and the one I tested with) is to update the local hosts file on the RDWeb servers to point the LB DNS name (GW.domain.com) to the host’s local IP address. This way, the Azure AD Proxy connector service will keep all traffic for the load balanced URL on the local machine. This will work fine until there is a problem with the local web server while the connector keeps running. This solution overrides the intended purpose of the load balancer, so it is best to go with the first option in production.
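To confirm the hosts file override is in place, a small check can read the hosts file and report which IP the load balanced name maps to. The following is a minimal Python sketch, not part of the original setup: the function name `hosts_override` and the sample address `10.0.0.11` are assumptions for illustration, and the path shown is the standard Windows hosts file location.

```python
from typing import Optional

# Standard hosts file location on Windows.
HOSTS_PATH = r"C:\Windows\System32\drivers\etc\hosts"

# What the override line might look like (10.0.0.11 is a hypothetical local IP).
SAMPLE_HOSTS = "10.0.0.11    GW.domain.com    # keep connector traffic local\n"


def hosts_override(hosts_text: str, name: str) -> Optional[str]:
    """Return the IP that the hosts file text maps `name` to, or None if absent."""
    for line in hosts_text.splitlines():
        # Drop trailing comments, then split into IP followed by host names.
        entry = line.split("#", 1)[0].split()
        if len(entry) >= 2 and name.lower() in (h.lower() for h in entry[1:]):
            return entry[0]
    return None
```

On an RDWeb server, pass in the contents of the file at `HOSTS_PATH` and the name `GW.domain.com`. A result of `None` means the name still resolves through DNS to the load balancer.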

3 thoughts on “Azure AD Application Proxy and IIS”

How do you recommend putting the servers with Azure Proxy connector installed external to the load balancer?

How did you configure the Azure load balancer, with or without session persistence? If it weren’t for the load balancing done by the connectors, I’d definitely have it on. But I did what you suggest: I have 4 gateways, and set up 2 dedicated “proxy connector” servers that are totally separated from the RDWeb and gateway functions. With session persistence turned on, the load balancing doesn’t work, and I see 2 gateways with a lot of sessions, and 2 with only a few.

I considered removing the gateway load balancer, putting wildcard certs with SANs for each gateway node, and then using round robin so I have a name that I can set for the gateway in the RDS configuration. The connector would do the load balancing and (allegedly) not send traffic to a non-functional gateway node.

Since I already have wildcard certs that include the node names, I could make the change easily, and nearly seamlessly, by just adding the additional IP addresses to the current Gateway DNS name and then removing the load balanced IP from the DNS name.

Thoughts?

I have session persistence turned off. With persistence turned on, it could appear that the source is the proxy connectors, and the load balancer will always direct the traffic to the same RD Gateway. Round robin DNS could also work. My concern with round robin is how traffic is directed to resources when they are not available. Some applications will try additional hosts if one is unavailable, but I’m not sure how the proxy connectors will behave. It should be fairly easy to test, though.
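As a starting point for that test, the sketch below resolves every address behind a round robin name and tries a TCP connection to each one. It is a rough Python sketch under stated assumptions: the function names `resolve_all` and `probe` are made up for illustration, port 443 is assumed because RDWeb is served over HTTPS, and it only shows which nodes answer. It does not reveal how the proxy connector itself handles an unavailable node.

```python
import socket
from typing import Callable, Dict, Iterable, List


def resolve_all(name: str, port: int = 443) -> List[str]:
    """Return every distinct IPv4 address the DNS name resolves to."""
    infos = socket.getaddrinfo(name, port, socket.AF_INET, socket.SOCK_STREAM)
    return sorted({info[4][0] for info in infos})


def probe(addresses: Iterable[str], port: int = 443, timeout: float = 3.0,
          connect: Callable = socket.create_connection) -> Dict[str, bool]:
    """Attempt a TCP connection to each address and record success or failure."""
    results: Dict[str, bool] = {}
    for addr in addresses:
        try:
            conn = connect((addr, port), timeout)
            conn.close()
            results[addr] = True
        except OSError:
            # Covers refused connections, timeouts, and unreachable hosts.
            results[addr] = False
    return results
```

Running `probe(resolve_all("GW.domain.com"))` from a connector server, once with all gateways up and once with the web service stopped on one node, would show which addresses stop responding. Comparing that against the connector event log would then indicate whether the connector retries another address.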